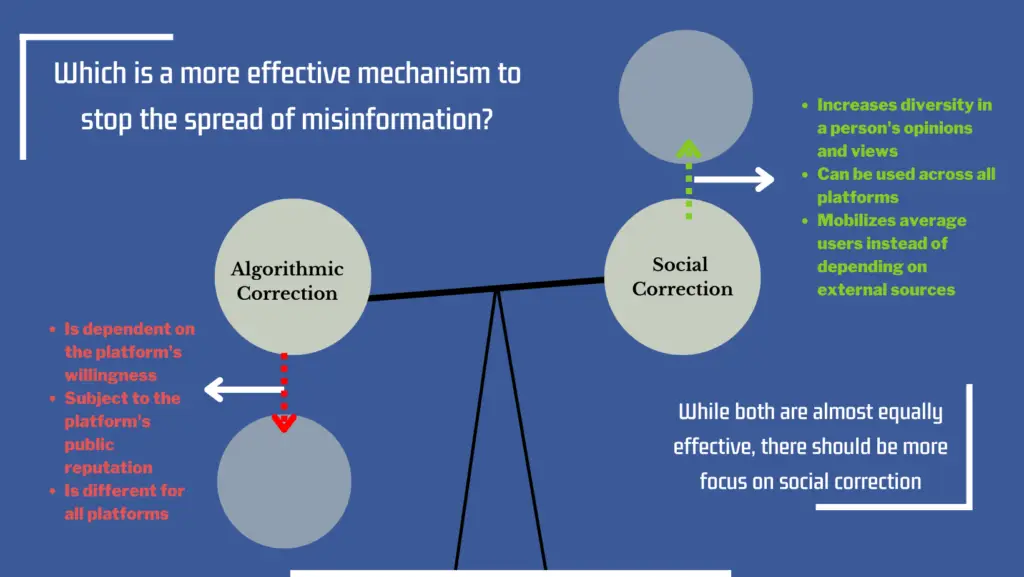

Through this study we aim to answer a pressing question about the most effective way to mitigate misinformation: is it algorithmic correction, meaning the ‘related stories’ feature that Facebook uses, or social correction, meaning the replies and comments under posts? These approaches rest on contrasting ideas. On one side, automation bias leads people to over-accept a computer's results and to trust humans less. On the other, social media forms weak ties between people, which carry little emotional intensity and expose people to a greater diversity of ideas and opinions. We also study whether conspiracy ideation, i.e., people’s tendency to believe conspiracies, might make it harder to mitigate misinformation. We found that both approaches were almost equally effective, even in the presence of conspiracy ideation, with social correction doing only slightly better.

While the difference is marginal, we suggest that the focus should be more on social correction, at least for emerging health issues. Algorithmic correction depends on a company’s willingness to reduce the spread of misinformation and differs from platform to platform; for instance, Twitter and Reddit do not have a ‘related stories’ feature. Moreover, trust in algorithms is tied to trust in the company, so one piece of bad news can undermine algorithmic correction efforts. Finally, social correction lets social media users engage with opinions and ideas beyond those they already hold, making them more informed.